Compute as a Commodity

A Framework for When GPU Futures Markets Will Emerge

At Variant, we love thinking about new markets. Emergent asset classes and financial products, new asset issuance, expanded market access, and novel ways to participate are in our founding DNA.

Recently, we’ve been thinking about markets around compute. Access to compute is a large and growing category and one that’s arguably primed for greater financialization. But compute supply/demand dynamics are highly complex, opaque, and constantly evolving. Open questions remain around market timing, structure, and even what asset is being traded.

As we debate and discuss these questions, we want to share a window into our emerging framework for thinking about compute markets.

Five conditions tend to precede the emergence of a new futures market:

- Supply-side fragmentation

- Persistent price volatility

- Some form of physical settlement infrastructure

- A standardized, tradable unit

- The absence of substitutes for price discovery or hedging

Our framework contextualizes the current landscape for compute markets within each of these five vectors. We use historical analogs to explain why each vector matters and to predict when a market will hit escape velocity.

TL;DR

A quick run-through of the framework shows that today’s market for compute lacks the maturity to sustain a robust futures market. (That said, the market is incredibly dynamic, and many startups are actively working to change this; if that’s you, please reach out!)

This is how we currently score compute futures markets on each of the five vectors:

- Supply-side fragmentation: 🔴 Supply is heavily concentrated among hyperscalers.

- Price volatility: 🟢 GPU prices are highly volatile.

- Physical settlement infrastructure: 🟢 Physical settlement infrastructure exists at the OTC broker level.

- Standardization: 🔴 There is no standardized, tradable unit for compute.

- Absence of substitutes: 🟡 Vertically integrated providers can hedge internally while the rest of the market is forced to be long only.

Supply-side fragmentation (Compute Score: 🔴)

Futures markets are mechanisms for price discovery. Concentrated supply removes the need for price discovery because prices are set by a small number of large suppliers, which eliminates most pricing uncertainty.

We’ve seen this consistently throughout history. Oil futures gained strength only after supply-side cartels (e.g., the Seven Sisters) weakened. Electricity markets formed after government deregulation stripped monopoly pricing and enabled independent producers to enter the market. Supply-side fragmentation helped propel futures markets as important venues for price discovery.

When we look at compute dynamics today, the supply side appears relatively concentrated. The top four hyperscalers control roughly 78% of global self-built critical IT power capacity and roughly 69% of the H100 supply (assuming 12.4m H100s in Q4 2025). This leads us to believe they also dominate global compute time capacity. Supply is not fragmented.
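One way to make "concentrated" concrete is to compute a four-firm concentration ratio (CR4) and a Herfindahl-Hirschman Index (HHI) over supplier shares. The sketch below is illustrative only: the 78% total for the top four comes from the figure above, but the split among those four, the long tail of small suppliers, and the fragmented comparison case are all invented assumptions.

```python
def concentration_ratio(shares_pct, k=4):
    """Sum of the k largest market shares, in percentage points."""
    return sum(sorted(shares_pct, reverse=True)[:k])


def hhi(shares_pct):
    """Herfindahl-Hirschman Index: sum of squared percentage shares (max 10,000)."""
    return sum(share ** 2 for share in shares_pct)


# ASSUMPTION: a hypothetical split in which four large suppliers total 78%
# and eleven small suppliers hold 2% each. Only the 78% total is from the text.
concentrated = [30, 20, 16, 12] + [2] * 11

# ASSUMPTION: a hypothetical fragmented market of twenty equal suppliers.
fragmented = [5] * 20

print(concentration_ratio(concentrated), hhi(concentrated))  # 78 1744
print(concentration_ratio(fragmented), hhi(fragmented))      # 20 500
```

Both measures fall as supply spreads across more sellers, which is the direction of travel this vector tracks.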

On our minds, though, is what might change that dynamic. More neoclouds are emerging. New chip architectures create opportunities for other vendors to win market share. Some of the longer-term contracted capacity from the major labs may end up underutilized, meaning those labs could eventually become compute suppliers/sellers in the market.

So, while we are unsure about the future degree of concentration, our current hypothesis is that directionally the supply side of the market will become more fragmented than it is today.

Price volatility (Compute Score: 🟢)

Another precondition for a futures market is that the price of the underlying asset is highly volatile. Without meaningful price uncertainty, a hedger has little to insure against. Volatility also attracts speculators, who profit from big price swings. If the market is flat or predictable, speculators turn their attention to other markets.

We saw this happen in oil in the 1950s when the Soviet Union, in reaction to an oil glut, listed prices below the Seven Sisters’ posted price. In response, the Sisters lowered prices in the Middle East without notifying oil producers in the region. The cascading shock led to the nationalization of oil in the Middle East, the formation of OPEC, and increased levels of uncertainty around oil prices worldwide. Oil volatility subsequently caused volatility in electricity in the 1970s.

Compute pricing is and will continue to be volatile. The speed at which new supply comes to market is uncertain. New chip or data center architectures may improve token efficiency for specific tasks. Demand continues to proliferate (and in ways that are impossible to forecast).

We have high confidence that this precondition is in place today.
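For readers who want to measure this rather than take it on faith, a common yardstick is annualized realized volatility: the standard deviation of log returns, scaled by the square root of the number of periods per year. The sketch below uses made-up daily $/GPU-hour quotes; the numbers, the function name, and the 365-day annualization are assumptions for illustration, not data from any index provider.

```python
import math
import statistics


def realized_volatility(prices, periods_per_year=365):
    """Annualized sample standard deviation of log returns for a price series."""
    log_returns = [
        math.log(later / earlier) for earlier, later in zip(prices, prices[1:])
    ]
    return statistics.stdev(log_returns) * math.sqrt(periods_per_year)


# ASSUMPTION: hypothetical daily $/GPU-hour quotes, invented for illustration.
flat_quotes = [2.00, 2.00, 2.00, 2.00, 2.00]
choppy_quotes = [2.00, 2.30, 1.90, 2.50, 2.10]

print(realized_volatility(flat_quotes))    # a flat series has zero volatility
print(realized_volatility(choppy_quotes))  # swings produce a large annualized figure
```

A flat series gives hedgers nothing to insure against and speculators nothing to trade, which is why this vector is a precondition rather than a nice-to-have.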

Physical settlement infrastructure (Compute Score: 🟢)

For a market to function efficiently, purchasers must trust they can receive (and consume) the instrument in question at the stated date and time. That requires infrastructure: mechanisms to aggregate supply, ensure reliable delivery, clear trades, handle collateral, and manage settlement. These jobs are often performed by intermediaries or brokers.

In the context of electricity markets, these tasks are performed by independent system operators, neutral third parties that operate as quasi-governmental entities. There’s no apples-to-apples equivalent yet in compute markets, but our hypothesis is that compute brokers/OTC desks are beginning to (and will increasingly) assume many of the above roles.

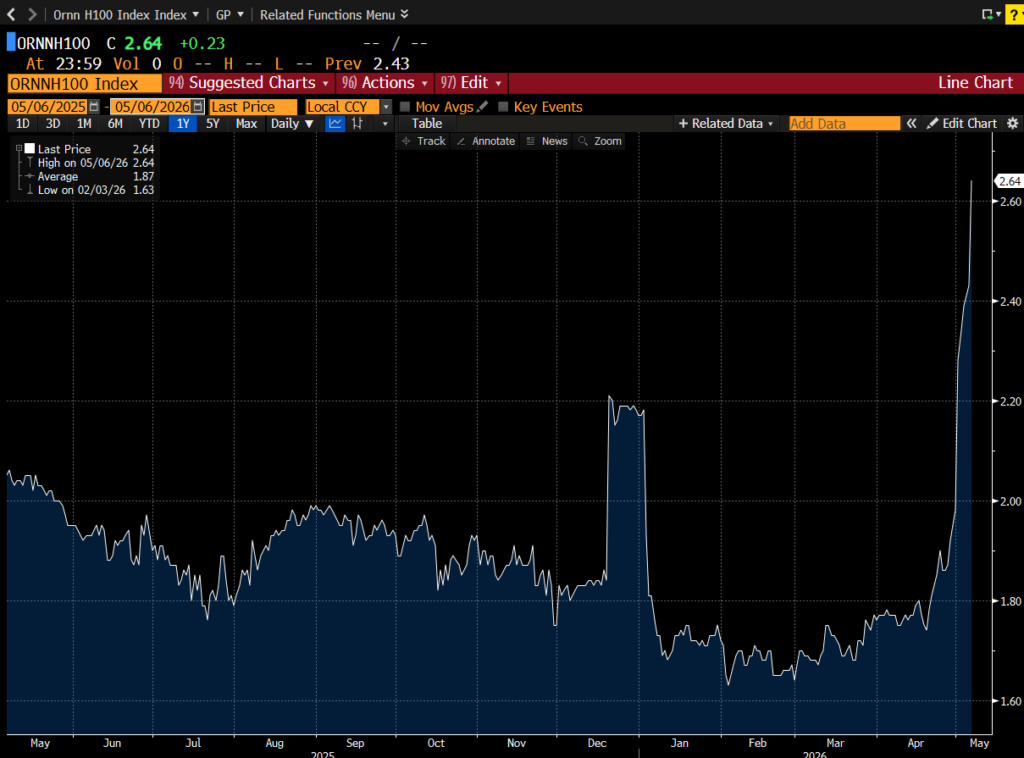

Today, brokers are forming indices and data aggregation tools around compute purchase and rental agreements to nail down market prices. Ornn and Silicon Data are already publishing price feeds for data-center-grade GPUs. Broker groups are also forming consensus around contract agreements, similar to how the SAFE standardized early-stage funding agreements. These tools improve the underlying physical settlement infrastructure, which is largely coordinated in group chats today.

We give physical settlement infrastructure a green score because it establishes a baseline for price discovery. However, it is imperfect compared to what a true spot market looks like at maturity. These purchases are at the infrastructure level, and not every market participant has the right to openly resell after purchase. We are closely monitoring developments in new market creation at this level.

Standardization (Compute Score: 🔴)

A major challenge with new commodities is often the degree to which the units are distinct and non-fungible. An abundance of variables can fragment liquidity across many markets and/or lead to basis risk that’s too high for most hedging and delivery needs.

Crude oil, for example, is graded by density and sulfur content, which vary by production region. NYMEX found product-market fit with its WTI (light and sweet) contract because it narrowed in on a standard that was serviceable to the global upstream market and is even used to hedge in downstream markets such as airlines. Electricity, meanwhile, is standardized by region, accounting for supply/demand waves that differ based on temperature, population density, and other factors.

The compute market lacks a level of standardization adequate for general hedging needs. The challenge is that one H100 instance doesn’t always equal another H100 instance. Factors like region (and local electricity inputs), chassis (e.g., hardware and networking components), and tenor (i.e., contract duration) add variability across GPU-instance pricing.
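Basis risk can be made concrete with a simple hedge-effectiveness measure: the share of a buyer's price variance that a minimum-variance hedge against a reference index would remove, which equals the squared correlation between the two series. The sketch below is a minimal illustration under stated assumptions; the index and instance price changes are invented, and the function name is hypothetical.

```python
import statistics


def hedge_effectiveness(spot_changes, index_changes):
    """Fraction of spot-price variance removed by a minimum-variance index hedge.

    Equal to the squared correlation between spot and index price changes.
    """
    mean_spot = statistics.fmean(spot_changes)
    mean_index = statistics.fmean(index_changes)
    cov = sum(
        (s - mean_spot) * (i - mean_index)
        for s, i in zip(spot_changes, index_changes)
    )
    var_spot = sum((s - mean_spot) ** 2 for s in spot_changes)
    var_index = sum((i - mean_index) ** 2 for i in index_changes)
    return cov ** 2 / (var_spot * var_index)


# ASSUMPTION: hypothetical period-over-period price changes ($/GPU-hour).
index_changes = [0.10, -0.05, 0.08, -0.12, 0.03]
tracking_instance = [0.10, -0.05, 0.08, -0.12, 0.03]   # moves with the index
bespoke_instance = [0.02, 0.06, -0.04, -0.01, 0.09]    # region/chassis/tenor differ

print(hedge_effectiveness(tracking_instance, index_changes))
print(hedge_effectiveness(bespoke_instance, index_changes))
```

An instance whose price tracks the index can be hedged almost completely; one whose region, chassis, or tenor makes it move independently retains most of its risk even after hedging, which is the practical meaning of "basis risk that's too high."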

That said, we’re seeing early indicators of standardization, particularly when demand comes from long-tail (e.g., non-frontier lab) inference. Inference workloads, as opposed to training, have far simpler infrastructure requirements and can run in a distributed context instead of a colocated one. If inference supply fragments across many providers, for example if open weights gain market share, standardization may emerge.

Absence of substitutes (Compute Score: 🟡)

This is a subtle and often overlooked point in market formation. Futures markets were made to serve hedgers. If substitutes exist with adequate liquidity and negligible basis risk, alternative contracts will see no adoption.

A textbook example is the lack of adoption for jet fuel futures because demand is sufficiently met by the WTI and other upstream indices. Adjacent to electricity, temperature-based futures failed as market participants found it more efficient to hedge the result of price swings (electricity) than the cause (temperature).

Today, compute is hedged by model providers via long-term rental agreements or joint ventures, often structured as take-or-pay arrangements, which exchange spot-price exposure for counterparty risk. Hyperscalers usually own their GPU installations outright. Long-tail providers, on the other hand, lack both the contractual leverage to secure preferential rental terms and the capital to build out their own verticalized infrastructure, so they bear the full brunt of spot market volatility. From a markets perspective, no substitute exists; however, players who control supply can hedge internally via vertical integration.
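The trade-off in a take-or-pay arrangement can be sketched with simple arithmetic. The example below is a deliberately simplified model, not a description of any real contract: it assumes committed hours are paid for whether or not they are used, any overage is bought at spot, and counterparty risk is ignored. All prices and volumes are hypothetical.

```python
def take_or_pay_cost(committed_hours, contract_price, used_hours, spot_price):
    """Total cost when committed hours are paid regardless of use; overage is at spot."""
    overage_hours = max(0, used_hours - committed_hours)
    return committed_hours * contract_price + overage_hours * spot_price


def spot_only_cost(used_hours, spot_price):
    """Total cost for a buyer with no contract who pays spot for every hour."""
    return used_hours * spot_price


# ASSUMPTION: 1,000 committed GPU-hours at $2.00, with spot at $3.00.
# Under-utilization: only 600 hours are needed, so the commitment costs more than spot.
print(take_or_pay_cost(1000, 2.0, 600, 3.0), spot_only_cost(600, 3.0))    # 2000.0 1800.0

# Full utilization plus overage: the contract shields most of the volume from spot.
print(take_or_pay_cost(1000, 2.0, 1200, 3.0), spot_only_cost(1200, 3.0))  # 2600.0 3600.0
```

The contract caps price exposure on the committed volume but converts it into utilization risk, while the spot-only buyer carries the full price swing in both directions.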

Where this leaves us

Adding up the scorecard, it may be too early for compute to sustain a robust futures market. The market has the volatility to attract speculators and the early settlement infrastructure to support trades, but lacks the supply fragmentation and standardization needed for genuine price discovery at scale.

The OTC side is where most of the trading occurs. Brokers are building price feeds. Ornn and Silicon Data are publishing indices. Group chat deals are being formalized into contract templates. This is not nothing, but it has yet to become a market in the way that WTI or PJM is. The volume is too thin, the contracts too bespoke, and the supply too concentrated for the existing infrastructure to clear at scale.

The right way to read the framework is as a diagnostic tool, not a verdict. It tells us what’s missing, not what’s impossible.

Open questions

The market will develop in ways we’re still uncertain about. We have a number of open questions with some working hypotheses. These hypotheses are loosely held and need further validation/invalidation. Below, we’ll lay out their steelmans.

Is the supply side of the market going to be more or less fragmented in 1-2 years?

We expect modest fragmentation. Neoclouds are bringing new capacity online faster than any other category. New regions are coming online as power becomes a binding constraint, which favors operators who can site capacity near cheap electricity rather than near existing hyperscaler footprints. Fortune 2000 enterprises are even standing up small-scale data centers. Expansion along this frontier is seemingly inevitable.

However, the standard business model relies on large-scale, long-term contracts with reliable counterparties such as hyperscalers and frontier labs. Cloud brokerage providers such as Hyperbolic and SF Compute counterposition by offering capacity on a per-hour basis. These businesses serve the long tail of compute demand from AI-native startups, application-layer companies running inference on open weights, and research labs without frontier-scale budgets. We believe the adoption of open weights in particular will lead to further fragmentation of capacity as supply de-verticalizes from frontier labs and hyperscalers.

How will standardization unfold?

Index providers are forming standards around GPU instance cost per hour. These feeds express rough estimates rather than exact prices. Instance prices vary on several axes, including region, chassis, and tenor, that make it difficult to have a standardized price. Chassis differentiation, in particular, is a result of data centers tailored for bespoke workloads and hyperscalers optimizing for ecosystem lock-in rather than uniformity across the market.

Standards emerge when there is uniform market demand. The WTI standard gained adoption because it serviced a broad spectrum of downstream refinements like gasoline, diesel, and jet fuel. Today, compute demand is driven by AI training and inference workloads. Training infrastructure is bespoke and optimized for long, compute-heavy jobs in large, centralized facilities, making the underlying compute instances virtually non-fungible. Inference infrastructure, on the other hand, requires simpler hardware specifications and less power than training clusters; it optimizes for latency, meaning infrastructure is spread across different regions rather than colocated. Inference is fungible and is expected to reach 65%+ of AI compute demand by 2029. We suspect infrastructure-level optimizations around compute that services this market will lead to uniform compute requirements across providers.

If chip-level instances still vary, standardization may instead come from performance benchmarks. MLPerf, the benchmark suite maintained by the MLCommons consortium, grades inference and training performance across a wide variety of model architectures. In this world, GPU instances are traded not on their hardware specifications, but on the quality and efficiency of their output.

What could prevent standards from emerging over the next 1-2 years?

We think walled gardens and bespoke workloads will kill attempts at standardization. Over the next 1-2 years, hyperscalers and frontier labs will push to maintain their dominance in AI infrastructure and model provision. Without a decoupling of the two, they will maintain hardware based on their own needs, which differ across companies. The adoption of new chip architectures will further fragment hardware specifications, making it difficult for standards to develop.

How will open weights gain meaningful adoption?

Broad adoption of open weights is the easiest pathway for compute markets to form. The two core bottlenecks for these markets today are supply-side concentration and a lack of standardization. Open weights democratize the ability to run inference. This, in turn, creates incentives for the formation of independent operators and leads to infrastructure optimizations tailored for these specific models. We saw the same story play out in Bitcoin mining, where open-source software led to many miners and standardization around hardware configurations.

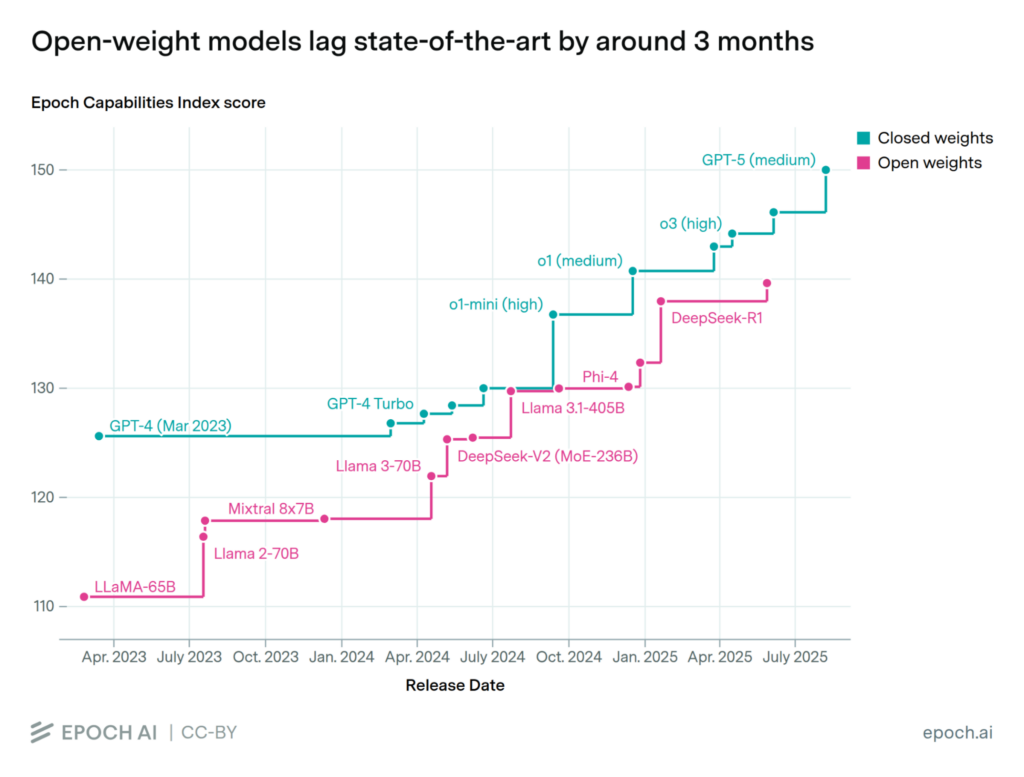

To date, open weights have consistently trailed closed weights in performance. That said, if the trend continues, open weights will soon reach the same performance thresholds we see in closed weights today. Businesses are already embedding closed weights throughout their systems and seeing a massive increase in productivity. In three months, this same productivity boost will be available at a fraction of the price. Most businesses, however, will likely continue opting for the best-performing model. We believe there will come a time when frontier closed weights are too expensive for the tasks delegated to them, and businesses will optimize intelligence across different models. It’s important to remember that frontier labs service this inference at a loss, and they will eventually need to increase prices to stay in business. When this time comes, open weights will have their moment.

What will be the ultimate unit of account traded?

Compute can be roughly broken down into three layers: chip, chip instance per hour, and tokens.

Chips are heavily supply-side concentrated. ASML has a monopoly on the lithography machines used by TSMC, which has a monopoly on the chip fabrication plants used by Nvidia, which has a monopoly on frontier chip design. Further, a chip is useless unless it’s plugged into a power supply and maintains high uptime. This leads us to believe that individual and deliverable chips will not be the ultimate unit of account.

Chip instance per hour is a function of the time period in which a chip can actually be used. This is arguably the most valuable state of a chip and the layer that we reference in this post. At this layer, compute as a commodity behaves similarly to electricity given there is enough demand centered on compute resources. We envision compute being traded similarly to electricity and other utilities: standardized in regional contracts (compute is a function of electricity) with spot markets and futures markets layered on top for hedging. In a chip-instance-per-hour format, this is achievable.

Tokens are downstream of compute instances and are also likely candidates for the ultimate unit of account. If tokens are the main driver of compute instances, markets for tokens create a way for the demand side to hedge costs and the supply side to lock in revenue. The supply side can hedge costs via continued long-term contracts or vertical integrations and remain concentrated. Tokens, however, are not uniform across models. Each model has its own tokenizer for chopping up text and produces different outputs, so tokens are not perfectly fungible across use cases. That said, we are keeping a close eye on developments.

—

Thank you to Jesse Walden, Elijah Fox, Daniel Barabander, Kayvon Tehranian, Sabina Beleuz, and Simeon Bochev for their contributions to this framework, and to Siiri Ruuhela, Meltem Demirors, Kyle Morris, Saurabh Sharma, Andrawes Bahou, and many others for their thoughts and feedback.

Disclaimer

All information contained herein is for general information purposes only. It does not constitute investment advice or a recommendation or solicitation to buy or sell any investment and should not be used in the evaluation of the merits of making any investment decision. It should not be relied upon for accounting, legal or tax advice or investment recommendations. You should consult your own advisers as to legal, business, tax, and other related matters concerning any investment. None of the opinions or positions provided herein are intended to be treated as legal advice or to create an attorney-client relationship. Certain information contained in here has been obtained from third-party sources, including from portfolio companies of funds managed by Variant. While taken from sources believed to be reliable, Variant has not independently verified such information. Any investments or portfolio companies mentioned, referred to, or described are not representative of all investments in vehicles managed by Variant, and there can be no assurance that the investments will be profitable or that other investments made in the future will have similar characteristics or results. A list of investments made by funds managed by Variant (excluding investments for which the issuer has not provided permission for Variant to disclose publicly as well as unannounced investments in publicly traded digital assets) is available at https://variant.fund/portfolio. Variant makes no representations about the enduring accuracy of the information or its appropriateness for a given situation. This post reflects the current opinions of the authors and is not made on behalf of Variant or its Clients and does not necessarily reflect the opinions of Variant, its General Partners, its affiliates, advisors or individuals associated with Variant. The opinions reflected herein are subject to change without being updated. 
All liability with respect to actions taken or not taken based on the contents of the information contained herein are hereby expressly disclaimed. The content of this post is provided “as is;” no representations are made that the content is error-free.