Daniel Barabander

The Fraud Thesis

How Crypto and AI Are Breaking Fraud's Cost Curve

Crypto and AI will increase financial fraud.

Crypto’s enablement of internet bearer assets means fraudsters can now settle instantly, irreversibly, and without identity requirements, compressing the time to cash out while eliminating the clawback mechanisms that traditional rails provide. AI enables deception to be generated and deployed at scale, increasing the effectiveness of social engineering while eroding the reliability of existing authentication methods.

They each improve the unit economics of fraud. Better economics will result in a sustained rise in fraud — and a large opportunity for companies building the infrastructure to mitigate it.

Fraud Is a Business

When people think of fraud, they typically picture a person in a basement somewhere tricking people out of their money for sport. But that’s the wrong mental model. Fraud is better understood as a business — and an enormous one at that. Nasdaq estimates that fraud schemes totaled $485 billion in losses in 2023. Like any business, the goal is to maximize revenue while minimizing cost.

Things that increase revenue are:

- a high conversion rate of tricking victims

- fast, reliable settlement

- limited reversibility or clawback risk

Things that increase the cost are:

- operational overhead

- friction and chokepoints in moving or cashing out funds

- enforcement risk

As the bottom line improves, the business of fraud becomes more attractive, and more participants enter the market. Internet bearer assets and AI are drastically improving that bottom line.
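To make the revenue and cost framing concrete, here is a minimal sketch of expected profit per fraud attempt. The formula, the class name, and every parameter value are illustrative assumptions, not measured data. The point is only the direction of the effect when clawback risk, cash-out friction, and enforcement risk fall.

```python
from dataclasses import dataclass


@dataclass
class FraudCampaign:
    """Illustrative per-attempt economics of a fraud operation (all inputs hypothetical)."""
    conversion_rate: float     # fraction of targeted victims who end up paying
    avg_payout: float          # average amount extracted from a victim who pays
    clawback_prob: float       # probability the proceeds are later reversed or seized
    cash_out_loss: float       # fraction of retained proceeds lost to friction when cashing out
    cost_per_attempt: float    # operational overhead per victim targeted
    expected_penalty: float    # enforcement risk: P(caught) x cost of being caught, per attempt

    def expected_profit(self) -> float:
        """Expected profit per attempt = retained revenue - operating cost - enforcement cost."""
        revenue = self.conversion_rate * self.avg_payout
        retained = revenue * (1 - self.clawback_prob) * (1 - self.cash_out_loss)
        return retained - self.cost_per_attempt - self.expected_penalty


# Hypothetical numbers: same scam, same victims, different settlement rail.
account_based = FraudCampaign(conversion_rate=0.01, avg_payout=5_000, clawback_prob=0.40,
                              cash_out_loss=0.20, cost_per_attempt=10, expected_penalty=5)
bearer_asset = FraudCampaign(conversion_rate=0.01, avg_payout=5_000, clawback_prob=0.05,
                             cash_out_loss=0.05, cost_per_attempt=10, expected_penalty=2)

for label, campaign in [("account-based rail", account_based),
                        ("internet bearer asset", bearer_asset)]:
    print(f"{label}: expected profit per attempt = {campaign.expected_profit():.2f}")
```

Under these made-up inputs, the bearer-asset case comes out at roughly 33 per attempt versus 9 on the account-based rail. Raising `conversion_rate` or lowering `cost_per_attempt`, which is the effect this piece attributes to AI, widens the gap further.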

Internet Bearer Assets

Up until the creation of the internet, most fraud operations could only target one type of asset: physical bearer assets. A bearer asset is one in which ownership is determined solely through possession. Cash is a quintessential physical bearer asset — someone hands you a dollar bill, and you become the legal owner merely by possessing it.

Fraudsters have historically had a bit of a love/hate relationship with the economics of physical bearer assets.

- In the plus column, physical bearer assets have instant and irreversible settlement and no identity requirements, which dramatically reduces both clawback and enforcement risk. And when the bearer asset is self-custodied, the holder is often solely responsible for protecting themselves because there is no external party incentivized to protect them. This increases conversion because value is pushed to the edges and most people are not trained in detecting fraud.

- However, in the minus column, defrauding people out of physical bearer assets is difficult to scale. The fraudster must be physically present, the asset must be physically transported, laundered, etc. Making this happen is expensive.

When the internet came along, it introduced a new asset for the fraudster to target: remotely accessible account-based assets. This fundamentally changed the economics of fraud but still represented somewhat of a mixed bag for fraudsters.

- On the one hand, account-based assets are not ideal for fraudsters because they introduce identity requirements. Identity adds another layer that must be spoofed and increases enforcement risk. The account-based relationship also creates high clawback risk, since an administrator maintaining the ledger of accounts can reverse transactions once fraud is detected. And because the administrator is legally responsible for safeguarding the asset (as a custodian), they are directly incentivized to invest in fraud detection and prevention systems. They also have a bird’s-eye view across all customer activity, allowing them to identify patterns and coordinate defenses. (The same logic applies to other centralized asset networks, like Visa.)

- On the other hand, the scalability benefits are massive. From anywhere in the world, the fraudster can wreak havoc on any other person in the world, programmatically. The fraudster can now live in a different jurisdiction, with no extradition, so even if the fraud was discovered, the administrator might not be able to do anything about it, significantly decreasing enforcement risk.

The net of all this is that, historically, the assets available to fraudsters forced a tradeoff: physical bearer assets offered finality but limited scalability, while account-based assets offered scalability but introduced reversibility and enforcement risk.

Then came crypto. The core innovation of crypto is the merger of the two: internet + bearer assets. People can own value simply through possession because that value is provably scarce, with all the efficiency of natively living on internet rails. This is the root of all the benefits we know and love about crypto. But it also inadvertently created a nearly perfect asset for a fraudster to target.

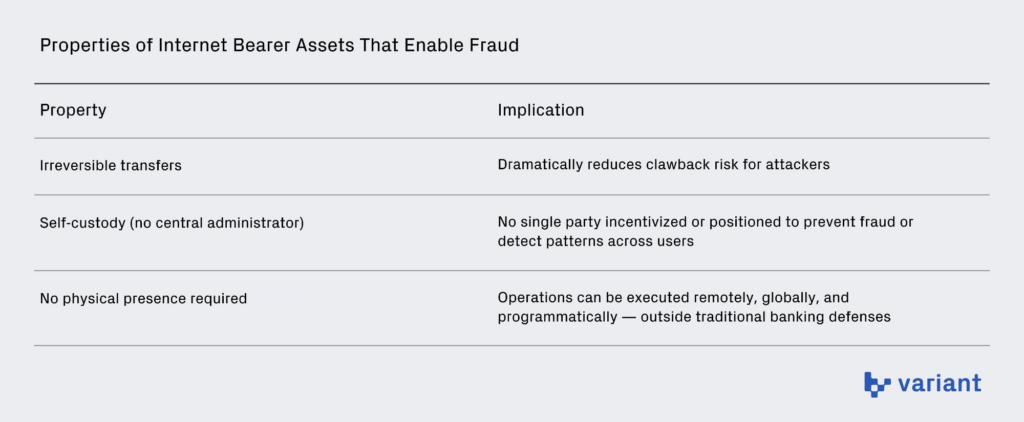

This is because internet bearer assets combine the best of the physical and internet worlds:

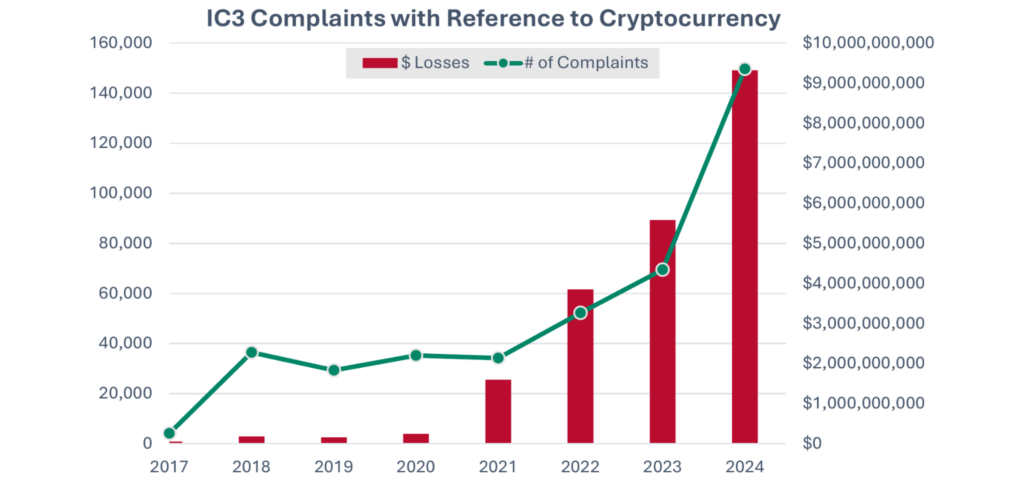

By increasing conversion, reducing clawback risk, and lowering operational cost, internet bearer assets shift the economics of fraud in favor of the fraudster. And unsurprisingly, data shows fraudsters are increasingly turning to crypto as the asset of choice. The FBI’s IC3 reports $16.6B in internet-crime losses in 2024, with approximately 83% attributable to “cyber-enabled fraud” — deception-based schemes such as investment, impersonation, and romance scams. Of that total, $9.3B involved complaints referencing crypto as the payment rail, a 66% increase from 2023.

In other words, crypto is not merely present in fraud — it has rapidly become one of its dominant settlement mechanisms. As internet bearer assets grow, so too does their appeal to fraudsters. And this dynamic does not depend on widespread consumer adoption. Even if crypto serves only as a settlement rail, its instant, irreversible nature improves the economics of fraud the moment value is converted onchain.

AI

Fraud involves deception, which we can reframe as distorting reality. The more successfully you can distort reality, the more people you can scam. Until recently, distorting reality at scale was expensive, which limited fraudsters’ ability to execute large-scale attacks. But AI is now driving that cost toward zero in many instances. This should come as no surprise, as the canonical test to benchmark AI, the Turing test, is quite literally a test of distorting reality: an AI’s ability to pass as human.

Take social engineering as an example. Historically, these attacks were constrained by a tradeoff between static and dynamic content. Static content (like the Nigerian prince scam) is highly automatable and cheap to produce, but it is poor at distorting reality because it is not personalized — meaning conversion rates are low (i.e., most people don’t fall for it). Dynamic content (like building a “relationship” in pig butchering scams) is far more personalized and therefore much better at distorting reality, leading to higher conversion rates. But it has traditionally been expensive to scale, requiring manual effort to tailor the deception to each victim.

AI removes this tradeoff by automating dynamic content. Fraudsters can now combine the scalability of static outreach with the conversion benefits of personalized engagement. For example, in pig butchering schemes, thousands of agents can now be spun up simultaneously to carry on intimate, adaptive conversations with victims — something that previously required entire scam compounds to execute.

The same principles apply to AI’s ability to invalidate many of our existing authentication methods. When you upload a picture of your driver’s license to a platform as part of a KYC process, the intent is to prove you are who you say you are. The platform checks that the ID looks legitimate and cross-references the information you’ve provided against data from credit bureaus or data brokers. Historically, this worked reasonably well because faking coherence at scale was expensive. Creating a convincing picture of a driver’s license required real effort in Photoshop. Building a believable social media footprint and generating supporting artifacts (utility bills, phone records, employment history, etc.) required manual labor. The cost of distorting reality acted as a natural constraint on its effectiveness.

AI obliterates that cost structure because it makes fabricating those signals dramatically easier. ChatGPT can generate a convincing picture of a driver’s license in a couple of minutes. Agents can be used to automatically construct synthetic identities at scale, generating email histories, social media profiles, utility applications, phone numbers, employment records, and other digital breadcrumbs that many onboarding systems treat as credibility indicators.

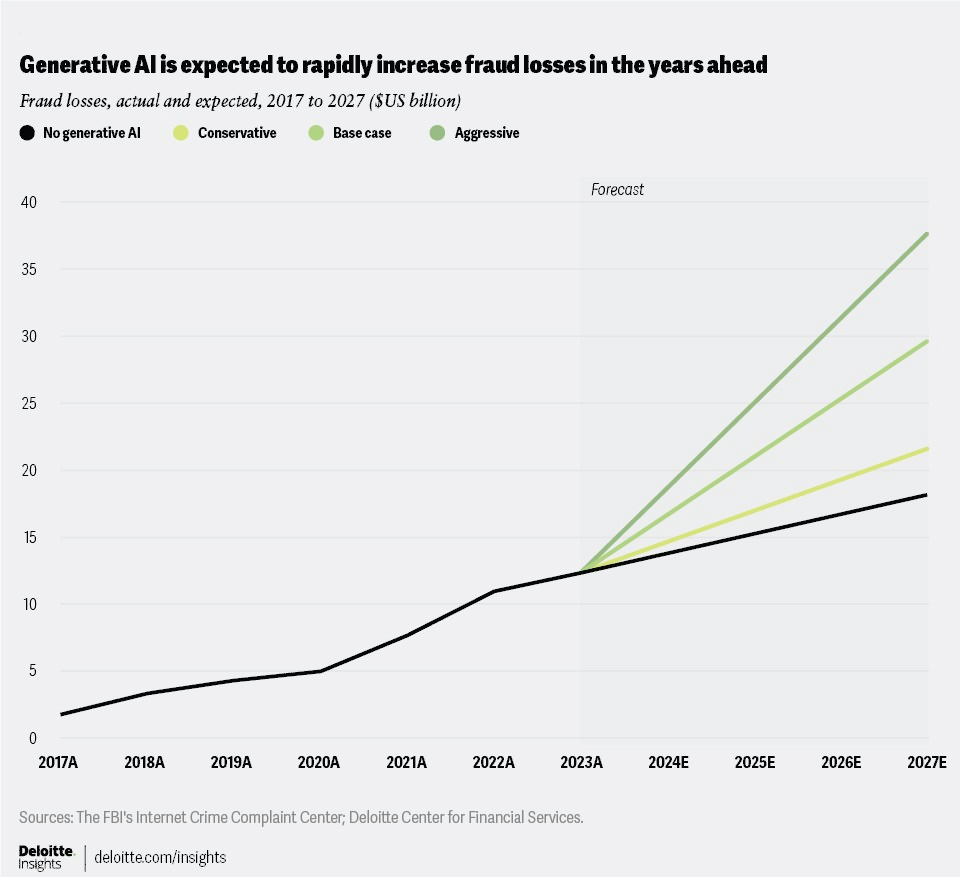

The upshot is that, much like internet bearer assets, AI is making the cost-benefit analysis of committing fraud more attractive than ever. Malicious phishing emails increased 1,265% and credential phishing increased 967% in the year following the launch of ChatGPT (Q4 2022–Q3 2023), a surge researchers attribute in part to AI. The use of AI in scams increased 456% between May 2024 and April 2025 compared with the same period the previous year. Deloitte projects that generative AI will enable fraud losses in the U.S. alone to reach $40 billion by 2027, up from $12.3 billion in 2023 — a 32% compound annual growth rate. These trends suggest that as the cost of generating believable deception continues to fall, the volume of fraud will continue to rise.

Investing in Anti-Fraud Tech

As depressing as this sounds, the investor in me smells opportunity. If the Fraud Thesis is correct, demand for anti-fraud technology will increase dramatically.

Broadly speaking, anti-fraud tech can be divided into two categories: preventing fraud and recovering from fraud. One of my goals in publishing this piece is to learn from founders about what kinds of infrastructure this new environment will require. But there are already two themes I’m particularly excited about investing in — one in each category: preventing fraud using cryptographic proofs of reality and recovering from fraud through asset-seizure networks.

(1) Preventing Fraud: Cryptographic Proofs of Reality

If the core concern with AI is that it dramatically reduces the cost of distorting reality, then we need new primitives grounded in reality that AI cannot easily spoof.

We actually already have the perfect substrate for these primitives: the physical world. AI can distort digital reality, but it cannot manipulate physical reality. The problem is we have failed to secure the bridge between the physical and the digital to make this substrate useful.

Our driver’s license/KYC example above is illustrative. Notice that there are actually two authentication artifacts at issue: the physical driver’s license and the user’s picture of it. Spoofing a physical driver’s license is relatively expensive, since someone would need specialized equipment, proper materials, and attention to detail. But spoofing a photograph of that license is now trivial because it lives in digital space at the whim of ChatGPT.

Crucially, the costs associated with spoofing the physical driver’s license are unaffected by AI, so the physical license is a strong bulwark against AI’s deceptive abilities. The problem is that the “cost as security” properties of this physical artifact are currently lost in the conversion from physical to digital.

What we need are cryptographic proofs of reality. A cryptographic proof of reality takes guarantees from the physical world and wraps them in cryptography so their integrity is preserved in the digital world. As a result, the digital world would inherit the high cost of distorting reality in the physical world. This could dramatically reduce the effectiveness, and raise the cost, of using AI to commit fraud.

This idea is not new. Cryptographic proofs of reality already have mainstream adoption in specific contexts. Your passport contains a chip with data digitally signed by the issuing government, attesting that you appeared in person and that the passport was legitimately issued. Customs officials verify that signature to confirm the data has not been altered. Similarly, EMV chips in credit cards generate a unique cryptographic response to prove that a genuine physical card — not just a copied card number — is present during a transaction.
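The EMV mechanism can be sketched in a few lines. This is a minimal illustration of the card-present idea, assuming HMAC-SHA256 as a stand-in for EMV’s actual cryptogram algorithms and key derivation (which differ): the chip holds a secret key shared with the issuer, the terminal supplies a fresh unpredictable number, and the chip MACs the transaction data together with that number. A skimmer who copies only the card number cannot compute a valid response, and a recorded response fails against the next challenge. Passports work differently (the issuing government signs the chip data with a public-key signature), so this covers only the EMV case. `Card`, `issuer_verify`, and the message layout are hypothetical.

```python
import hashlib
import hmac
import secrets


def _transaction_message(pan: str, amount_cents: int, unpredictable_number: bytes) -> bytes:
    # Hypothetical layout: card number || 8-byte amount || terminal challenge.
    return pan.encode() + amount_cents.to_bytes(8, "big") + unpredictable_number


class Card:
    """Hypothetical chip card: the card number can be copied, the key (ideally) cannot."""

    def __init__(self, pan: str, key: bytes):
        self.pan = pan
        self._key = key  # stays inside the chip; shared only with the issuer

    def respond(self, amount_cents: int, unpredictable_number: bytes) -> bytes:
        message = _transaction_message(self.pan, amount_cents, unpredictable_number)
        return hmac.new(self._key, message, hashlib.sha256).digest()


def issuer_verify(issuer_keys: dict, pan: str, amount_cents: int,
                  unpredictable_number: bytes, cryptogram: bytes) -> bool:
    """Recompute the MAC with the issuer's copy of the key and compare in constant time."""
    key = issuer_keys.get(pan)
    if key is None:
        return False
    message = _transaction_message(pan, amount_cents, unpredictable_number)
    expected = hmac.new(key, message, hashlib.sha256).digest()
    return hmac.compare_digest(expected, cryptogram)


key = secrets.token_bytes(32)
card = Card("4111111111111111", key)
issuer_keys = {card.pan: key}

challenge = secrets.token_bytes(16)          # fresh for every transaction
genuine = card.respond(2_500, challenge)
print(issuer_verify(issuer_keys, card.pan, 2_500, challenge, genuine))  # True

# A clone with the copied card number but without the secret key produces a wrong MAC.
cloned = Card(card.pan, secrets.token_bytes(32))
print(issuer_verify(issuer_keys, card.pan, 2_500, challenge,
                    cloned.respond(2_500, challenge)))  # False

# Replaying the genuine response against the next challenge also fails.
next_challenge = secrets.token_bytes(16)
print(issuer_verify(issuer_keys, card.pan, 2_500, next_challenge, genuine))  # False
```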

These are great developments, but they are relatively limited because they are not internet native. At least in the U.S., your passport chip cannot be used to verify your identity when KYCing for a service, which is why you take a picture of it instead. The EMV chip is an even starker example: it was literally created to reduce fraud (magnetic-stripe skimming of card numbers), yet once you go online, you generally do not use that tech and instead just manually enter numbers.[1]

What would cryptographic proofs of reality look like if they were internet native? World provides a representative example. You scan your iris at a location in the physical world, and the hardware cryptographically attests that this physical event occurred. That proof then lives digitally in your wallet and can be presented online wherever a physical-world guarantee is required — namely, that there is a unique human behind the account.

The work World is doing is important. But the design space for cryptographic proofs of reality is much larger than in-person authentication by a trusted issuer. We also need proofs that are (1) lower friction and (2) capable of attesting to a wider range of real-world facts.

One such primitive I’m really excited about is location. Someone’s location is a statement about physical reality; an individual is in only one place at a time. This fact is inviolate. Very few identity signals carry such a strong informational property — and it is precisely that property that fraud attempts to violate. If a user’s device is showing they are at X but an action is also being initiated from Y, there is a good chance fraud is occurring (usually, an account takeover). For these reasons, location is already one of the most common inputs into fraud detection systems — and one of the most attractive, because these checks happen in the background unbeknownst to the user.
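The X-versus-Y check is commonly implemented as an “impossible travel” rule. A minimal sketch, assuming great-circle (haversine) distance on a spherical Earth and a hypothetical maximum plausible speed of 1,000 km/h; the names are illustrative. The rule is only as trustworthy as the coordinates it is fed, which is the weakness discussed next.

```python
import math
from dataclasses import dataclass

EARTH_RADIUS_KM = 6371.0
MAX_PLAUSIBLE_SPEED_KMH = 1_000.0   # hypothetical: a little faster than a commercial jet


@dataclass
class LocatedEvent:
    lat: float           # degrees
    lon: float           # degrees
    timestamp_s: float   # seconds since epoch


def haversine_km(a: LocatedEvent, b: LocatedEvent) -> float:
    """Great-circle distance between two events on a spherical Earth."""
    phi1, phi2 = math.radians(a.lat), math.radians(b.lat)
    d_phi = phi2 - phi1
    d_lambda = math.radians(b.lon - a.lon)
    h = math.sin(d_phi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(d_lambda / 2) ** 2
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(h))


def is_impossible_travel(prev: LocatedEvent, curr: LocatedEvent,
                         max_speed_kmh: float = MAX_PLAUSIBLE_SPEED_KMH) -> bool:
    """Flag a pair of events whose implied travel speed exceeds max_speed_kmh."""
    distance_km = haversine_km(prev, curr)
    elapsed_h = abs(curr.timestamp_s - prev.timestamp_s) / 3600
    if elapsed_h == 0:
        return distance_km > 1.0  # hypothetical tolerance for simultaneous events
    return distance_km / elapsed_h > max_speed_kmh


nyc = LocatedEvent(40.7128, -74.0060, timestamp_s=0)
moscow_one_hour_later = LocatedEvent(55.7558, 37.6173, timestamp_s=3_600)
newark_one_hour_later = LocatedEvent(40.7357, -74.1724, timestamp_s=3_600)

print(is_impossible_travel(nyc, moscow_one_hour_later))  # True (about 7,500 km in an hour)
print(is_impossible_travel(nyc, newark_one_hour_later))  # False (about 14 km in an hour)
```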

But current location solutions treat location as a proxy, not a proof. Existing methods (IP address, GPS, WiFi positioning, cell triangulation) rely on a small number of passively received, unauthenticated signals. Because the device simply trusts whatever signal it receives, manipulating a few observable inputs is often sufficient to alter the result. This has produced a mature ecosystem of consumer-grade spoofing tools: VPNs, mock-location apps (the app “Fake GPS location” has 50M+ downloads on the Google Play Store), and low-cost signal simulators. Location suffers from the same physical-to-digital failure described above: physical presence is costly, but the signals we rely on to measure it do not carry that cost into the digital layer.

If location could be guaranteed and cryptographically signed at origin, it would become a true proof of physical reality, transforming location from a heuristic risk signal into a frictionless, internet-native security primitive.

This is exactly what Octet is working on. Octet combines data from several sensors (accelerometer, gyroscope, barometer, magnetometer, and opportunistic signals) on your phone to calculate its position, then securely signs that result using your device’s protected hardware. To fabricate a valid proof, an attacker would need to spoof multiple independent signals coherently and sustain that spoofing over time — replicating real terrain, real movement, and real physical constraints. The cost of doing so becomes prohibitive. All of this runs natively on existing smartphones with no new hardware required.
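Octet’s implementation isn’t described in this piece, so the following is only a minimal sketch of the “signed at origin” half of the idea, under stated assumptions: the device signs a location claim bound to a verifier-supplied nonce and a timestamp, and the verifier checks integrity, the nonce, and freshness. HMAC-SHA256 with a shared key stands in for a hardware-protected signing key; a real deployment would more plausibly use an asymmetric key held in the phone’s secure hardware so the verifier never holds the secret. It does not model the multi-sensor fusion that makes the position itself hard to fake. `sign_location` and `verify_location` are hypothetical names.

```python
import hashlib
import hmac
import json
import secrets
import time
from typing import Optional


def _canonical(claim: dict) -> bytes:
    # Deterministic serialization so signer and verifier MAC the same bytes.
    return json.dumps(claim, sort_keys=True, separators=(",", ":")).encode()


def sign_location(device_key: bytes, lat: float, lon: float, nonce: str,
                  now: Optional[float] = None) -> dict:
    """Device side: bind the computed position to a nonce and timestamp, then sign it."""
    claim = {"lat": lat, "lon": lon, "nonce": nonce, "ts": time.time() if now is None else now}
    return {**claim, "sig": hmac.new(device_key, _canonical(claim), hashlib.sha256).hexdigest()}


def verify_location(device_key: bytes, proof: dict, expected_nonce: str,
                    max_age_s: float = 60.0, now: Optional[float] = None) -> bool:
    """Verifier side: reject tampered, replayed, or stale proofs."""
    claim = {k: v for k, v in proof.items() if k != "sig"}
    expected_sig = hmac.new(device_key, _canonical(claim), hashlib.sha256).hexdigest()
    if not hmac.compare_digest(expected_sig, str(proof.get("sig", ""))):
        return False                            # contents were altered after signing
    if claim.get("nonce") != expected_nonce:
        return False                            # proof was produced for a different request
    now = time.time() if now is None else now
    return 0 <= now - claim["ts"] <= max_age_s  # proof must be recent


device_key = secrets.token_bytes(32)
nonce = secrets.token_hex(16)                   # issued by the verifier for this request
proof = sign_location(device_key, 40.7128, -74.0060, nonce)

print(verify_location(device_key, proof, nonce))                         # True
print(verify_location(device_key, {**proof, "lat": 55.7558}, nonce))     # False: tampered
print(verify_location(device_key, proof, secrets.token_hex(16)))         # False: wrong nonce
print(verify_location(device_key, proof, nonce, now=proof["ts"] + 600))  # False: stale
```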

The use cases for fraud prevention are limitless, but here are just a few to consider:

- Shopping (online and in-person): A shopper was known to be in NYC an hour ago, but someone using that account is now initiating a request from Russia. Because the shopping platform uses Octet in the background to verify all requests, we know this discrepancy represents fraud.

- Remittances: A husband in Ohio wants to send money to his wife in Mexico City. The remittance app asks the husband for the wife’s general location, and he selects Mexico City. The wife gets a link to draw down the funds but must first pass an Octet check proving she is in that location. Even if the wife is phished by a fraudster in Myanmar, the fraudster cannot pass this check, and the funds are safe.[2]

- Geofenced access controls: A company offers access to sensitive information for employees only at corporate headquarters. To unlock it, a device runs Octet to prove it is physically within that geofence. Physical presence becomes the access key.

- Wire approval: A CFO must approve all wires above $500K. Before a wire clears, the approver’s device must produce a presence proof showing it is physically at headquarters. A business-email-compromise attacker in Romania steals the CFO’s credentials and submits a wire request over the threshold. Their device cannot produce a proof of presence at headquarters. The wire is halted automatically.
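The use cases above share one pattern: a sensitive action is allowed only if a verified presence proof places the device inside a specific area. A minimal sketch of that policy layer, assuming the proof has already been checked upstream (signature, nonce, freshness) and reduced to a coordinate plus a `verified` flag. The geofence coordinates and radii are hypothetical; the $500K threshold comes from the wire example.

```python
import math
from dataclasses import dataclass
from typing import Optional

EARTH_RADIUS_KM = 6371.0
WIRE_APPROVAL_THRESHOLD_USD = 500_000


@dataclass
class Geofence:
    name: str
    lat: float
    lon: float
    radius_km: float


@dataclass
class PresenceProof:
    lat: float
    lon: float
    verified: bool   # hypothetical: True only if upstream signature/freshness checks passed


def distance_km(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Haversine great-circle distance on a spherical Earth."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    h = (math.sin((phi2 - phi1) / 2) ** 2
         + math.cos(phi1) * math.cos(phi2) * math.sin(math.radians(lon2 - lon1) / 2) ** 2)
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(h))


def inside(fence: Geofence, proof: Optional[PresenceProof]) -> bool:
    """A missing or unverified proof never satisfies a geofence."""
    if proof is None or not proof.verified:
        return False
    return distance_km(fence.lat, fence.lon, proof.lat, proof.lon) <= fence.radius_km


def wire_decision(amount_usd: float, approver_proof: Optional[PresenceProof],
                  hq: Geofence) -> str:
    """Wires above the threshold clear only if the approver is provably at headquarters."""
    if amount_usd <= WIRE_APPROVAL_THRESHOLD_USD:
        return "release"
    return "release" if inside(hq, approver_proof) else "halt"


hq = Geofence("headquarters", 40.7580, -73.9855, radius_km=0.5)
mexico_city = Geofence("Mexico City", 19.4326, -99.1332, radius_km=30)

cfo_at_desk = PresenceProof(40.7581, -73.9850, verified=True)
print(wire_decision(750_000, cfo_at_desk, hq))  # release
print(wire_decision(750_000, None, hq))         # halt: the attacker cannot produce a proof
print(wire_decision(100_000, None, hq))         # release: below the threshold

# Remittance: only a recipient provably in Mexico City can draw down the funds.
print(inside(mexico_city, PresenceProof(19.4200, -99.1600, verified=True)))  # True
print(inside(mexico_city, PresenceProof(16.8409, 96.1735, verified=True)))   # False: Yangon
```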

(2) Recovering from Fraud: Asset Seizure and Recovery (“Crypto Kill Chain”)

If internet bearer assets improve fraud by eliminating reversibility and compressing settlement time, then recovery infrastructure must reintroduce clawback risk. We therefore need coordinated systems that can trace, freeze, and recover assets once they have been stolen through fraud.

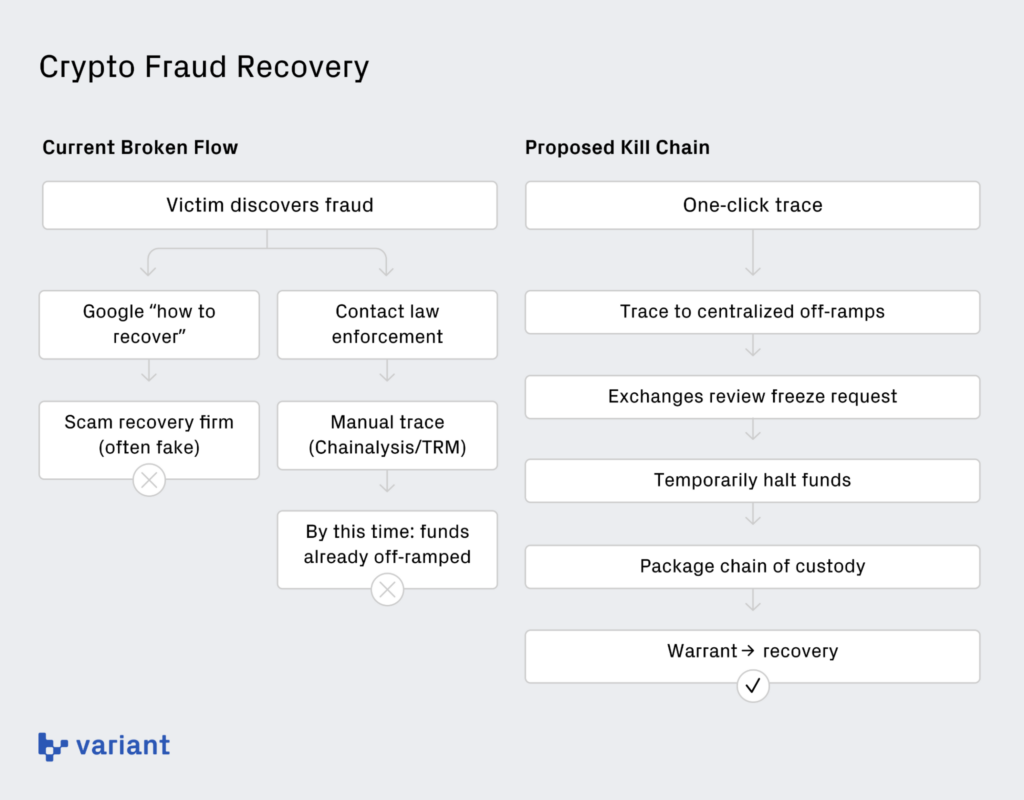

Our current methods for recovery in crypto are shockingly ad hoc and ineffective — especially when compared to traditional banking rails. In the legacy system, decades of infrastructure built on the Bank Secrecy Act, SAR reporting, and correspondent banking relationships created standardized pathways for freezing suspicious funds and coordinating with law enforcement. Crypto has no equivalent coordinated recovery layer — there is no unified, interoperable system designed for rapid victim asset recovery. That gap represents an enormous opportunity for a company to build a true recovery network — a “crypto kill chain.”

To see how broken things are, take the typical flow of “recovery” for a victim of pig butchering. The victim sends the crypto (it’s almost always crypto with pig butchering) and shortly thereafter realizes they’ve been duped. Rightly freaking out, the victim generally does two things.

First, they Google “How to recover crypto funds” and land on an independent investigations firm. While many of these firms are legitimate, many are themselves scams, promising recovery and then disappearing or providing useless reports (such as showing how the funds moved onchain and then doing nothing further). Victims are especially vulnerable at this moment because they are desperate for answers and not thinking clearly, which makes it a perfect opportunity for scammers to double-dip on the victim.

Second, they (or the investigations firm) contact local or federal law enforcement. If the agency even has access to tools like Chainalysis or TRM (which is a big “if”), a detective will typically conduct a manual, hop-by-hop trace of the crypto. The process is slow and resource-intensive, and by the time the analysis is complete, the attacker has often already off-ramped or further laundered the assets. This is not accidental: these tools were built primarily for compliance workflows — answering generalized questions like whether a wallet has interacted with sanctioned addresses — not for rapid, victim-level recovery tracing from a specific loss to a specific fiat off-ramp.

What we need instead is a true kill chain that will seamlessly trace, freeze, and recover assets. This is what Heights Labs is working on. With one click, law enforcement can trace the flow of funds from the victim to centralized off-ramps like exchanges. Those off-ramps integrate into the kill chain network, allowing them to seamlessly review incoming freeze requests and temporarily halt funds while further analysis is conducted. Law enforcement can then package the full chain of custody (the complete flow of crypto) into a warrant for a judge to sign and ultimately recover assets.
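Heights Labs’ tracing method isn’t described in this piece, so this is only a minimal sketch of the reachability step of a kill chain under simplifying assumptions: transfers form a directed graph of (sender, receiver) pairs, a breadth-first search follows funds outward from the victim until they reach a known off-ramp deposit address, and each hit becomes a freeze request carrying its path as the chain of custody. Real tracing also has to attribute amounts through splits and merges and handle bridges and mixers, none of which is modeled here. All function names, addresses, and exchange names are hypothetical.

```python
from collections import deque


def trace_to_offramps(transfers, start, offramps, max_hops=10):
    """Breadth-first search from `start` along outgoing transfers.

    Returns {offramp_address: path}, using the first (shortest) path found to each off-ramp.
    """
    graph = {}
    for sender, receiver in transfers:
        graph.setdefault(sender, []).append(receiver)

    paths = {}
    visited = {start}
    queue = deque([[start]])
    while queue:
        path = queue.popleft()
        address = path[-1]
        if address in offramps:
            paths[address] = path  # funds reached a custodial off-ramp: record and stop here
            continue
        if len(path) - 1 >= max_hops:
            continue               # stop expanding branches that are too long
        for receiver in graph.get(address, []):
            if receiver not in visited:
                visited.add(receiver)
                queue.append(path + [receiver])
    return paths


def build_freeze_requests(paths, offramps):
    """One request per off-ramp, carrying the hop-by-hop chain of custody."""
    return [
        {"exchange": offramps[address], "deposit_address": address, "chain_of_custody": path}
        for address, path in paths.items()
    ]


transfers = [
    ("victim", "scam_wallet"),
    ("scam_wallet", "hop_a"),
    ("scam_wallet", "hop_c"),
    ("hop_a", "hop_b"),
    ("hop_b", "exchange_x_deposit"),
    ("hop_c", "exchange_y_deposit"),
    ("hop_c", "unlabeled_wallet"),
]
offramps = {"exchange_x_deposit": "Exchange X", "exchange_y_deposit": "Exchange Y"}

for request in build_freeze_requests(trace_to_offramps(transfers, "victim", offramps), offramps):
    print(request["exchange"], " -> ".join(request["chain_of_custody"]))
```

Each request could then be routed to the named exchange for a temporary hold while investigators package the same path into a warrant, mirroring the flow described above.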

Taking this a step further, we can imagine a world where there is a “Crypto 911” for end users who can directly interface with the software to kick off the process and only pay if their funds are recovered (similar to apps that fight traffic tickets). A crypto kill chain like this could dramatically increase clawback and enforcement risk, restoring a key cost that internet bearer assets removed.

More Solutions Are Coming

The field of anti-fraud infrastructure is wide open. We will need infrastructure for monitoring (like what Blockaid is working on), insurance, autonomous fraud-hunting agents — the list goes on. An entire ecosystem of companies will emerge to rebalance the economics that now favor attackers.

The broader principle is the takeaway. Fraud scales when conversion increases, clawback risk declines, and operational costs collapse. The response cannot be incremental. It requires structural investment in technologies that either reduce conversion or raise the cost of fraud.

I discussed two new technologies in this piece. Cryptographic proofs of reality raise the cost of committing fraud. Crypto kill chains raise the cost of getting away with it. Together, they attack the unit economics of fraud from both sides.

If the Fraud Thesis is correct, the next decade will not simply see more fraud. It will see the construction of a new security layer designed to contain it.

If you’re building in this space — whether it’s cryptographic proofs of reality, asset recovery, fraud monitoring, or something I haven’t thought of yet — I’d love to hear from you. Shoot me a DM.

Footnotes

[1] EMV can certainly be used during an online payment to ensure that the purchaser has the physical card. Since most phones can now read a proximity EMV card, the payment processor can require the purchaser to tap their physical card against the phone during a risky online transaction. This could provide something much closer to a cryptographic proof of reality. But it’s currently rarely implemented because of the friction. Google Pay/Apple Pay provide a good example of an internet-native approach to a cryptographic proof of reality.

[2] A sophisticated fraudster could attempt to hire a local “mule” within a target geography to bypass a geofence. But doing so materially increases operational cost, coordination complexity, and enforcement risk. The narrower and more personalized the geofence (e.g., tied to a specific home address), the harder it becomes to source reliable local mules at scale.

Thank you to Benjamin Fels (Octet), Daniel Goldsmith (Heights Labs), David Stearns, Mike Mosier (Arktouros and ex/ante), Zoe Weinberg (ex/ante), Zack Shapiro (Rains), Noah Levine (a16z), and Larry Sukernik (Reverie) for their thoughtful comments and feedback on this article.

All information contained herein is for general information purposes only. It does not constitute investment advice or a recommendation or solicitation to buy or sell any investment and should not be used in the evaluation of the merits of making any investment decision. It should not be relied upon for accounting, legal or tax advice or investment recommendations. You should consult your own advisers as to legal, business, tax, and other related matters concerning any investment. None of the opinions or positions provided herein are intended to be treated as legal advice or to create an attorney-client relationship. Certain information contained in here has been obtained from third-party sources, including from portfolio companies of funds managed by Variant. While taken from sources believed to be reliable, Variant has not independently verified such information. Any investments or portfolio companies mentioned, referred to, or described are not representative of all investments in vehicles managed by Variant, and there can be no assurance that the investments will be profitable or that other investments made in the future will have similar characteristics or results. A list of investments made by funds managed by Variant (excluding investments for which the issuer has not provided permission for Variant to disclose publicly as well as unannounced investments in publicly traded digital assets) is available at https://variant.fund/portfolio. Variant makes no representations about the enduring accuracy of the information or its appropriateness for a given situation. This post reflects the current opinions of the authors and is not made on behalf of Variant or its Clients and does not necessarily reflect the opinions of Variant, its General Partners, its affiliates, advisors or individuals associated with Variant. The opinions reflected herein are subject to change without being updated. 
All liability with respect to actions taken or not taken based on the contents of the information contained herein are hereby expressly disclaimed. The content of this post is provided “as is;” no representations are made that the content is error-free.